The other day I treated myself to a little guilty pleasure: watching YouTube videos on a variety of subjects I enjoy. While doing so, I came across one that addressed the top 10 influential films of the 20th century. A few minutes into the presentation, the AI narrator began speaking about the 1939 family favorite, The Wizard of Oz. The interesting twist was that when it came time for ‘him’ to mention the title of the film, he said, The Wizard of Ounces. OK; wait – what?? Here was an instance of AI slop I didn’t expect. I rewound the video and played that small segment back. And yes, the disembodied narrator did say, The Wizard of Ounces.

As delightfully funny as this was, such a painfully obvious mistake seemed almost impossible. How could AI, with 45 terabytes’ worth of learning data at its disposal, stumble on the obvious? I browsed around looking for other AI-generated videos with similarly awkward pronunciation errors. Sure enough, there were plenty: from videos reading a four-digit year as an ordinary number (“…construction began in one thousand, nine hundred and sixty-two…”) to attempts at pronouncing medical or psychological terms that brought to mind the days on SNL when Darrell Hammond would portray Sean Connery on Jeopardy, massacring certain phrases to the chagrin of Will Ferrell’s Alex Trebek. Snicker, snicker; wink, wink.

That little experience began to drum up an annoying question: Why wasn’t such a mistake corrected? And before you judge that question to come from an old geezer bemoaning the lack of quality in today’s media, it was directed towards the AI. If artificial intelligence is saturated with the lion’s share of humanity’s knowledge, certainly it could have course-corrected such a painfully obvious error. And yet that’s the crux of the issue; it’s not that AI invents its occasional mistakes:

It inherits them.

AI’s hallucinatory excursions aren’t front-page news. For over three years, AI has been learning about our prodigious and eclectic creations conjured up over millennia. So when it makes such simplistic mistakes, we cannot help but wonder where these distorted results came from. And the answer keeps coming back to us. Whether it’s flawed data, untested script patches or poor quality control mechanisms, human errors caused by indifference lead one to question our true motivation for creating AI. That may seem incredibly narrow-minded, but it’s a question that bears addressing. If the purpose for creating an artificial general (or super) intelligence is to enhance humanity’s existence and help to expand our knowledge, capabilities and overall consciousness, then it stands to reason that such a noble and sweeping enterprise would call upon not only our greatest intellectual minds, but also our greatest and most human-centric visionaries. Or is it merely that we want to increase profits for a handful of people so we may hand over control of our global financial markets?

Ponder This

If we were to give a five-year-old a complete set of tools for building something, what would we expect? Keep in mind that the five-year-old in this scenario isn’t the AI; it’s us. In such a setting, we wouldn’t expect anything of value to anyone to be made. At best, it would be a badly made bird house that some may find irrepressibly adorable. True, artificial intelligence wasn’t built by five-year-olds. But it’s being operated by people employing a mentality that seems to be similar to that of a five-year-old: AI is powerful, it’s neat, and we can make lots of stuff that we can sell for lots of money!

The consequences of AI creating worthless junk (aka slop) were supposed to be addressed by agentic guardrails. Think of them like the character M-O from the animated Pixar film, WALL-E. They’re fastidious built-in frameworks designed to ensure that all of AI’s generated content is scrubbed to be compliant with industry and social standards, laws, or corporate policies. So what happened to The Wizard of Oz? Did the entirety of humanity’s film history get left out of AI’s training? Doubtful. Is it the fault of some person (or program module) that forgot to include a dataset in AI’s data farms, or is it the fault of that random person who asked AI to generate that video I watched? Who’s to blame?

The answer is: All of the above.

Final Thoughts

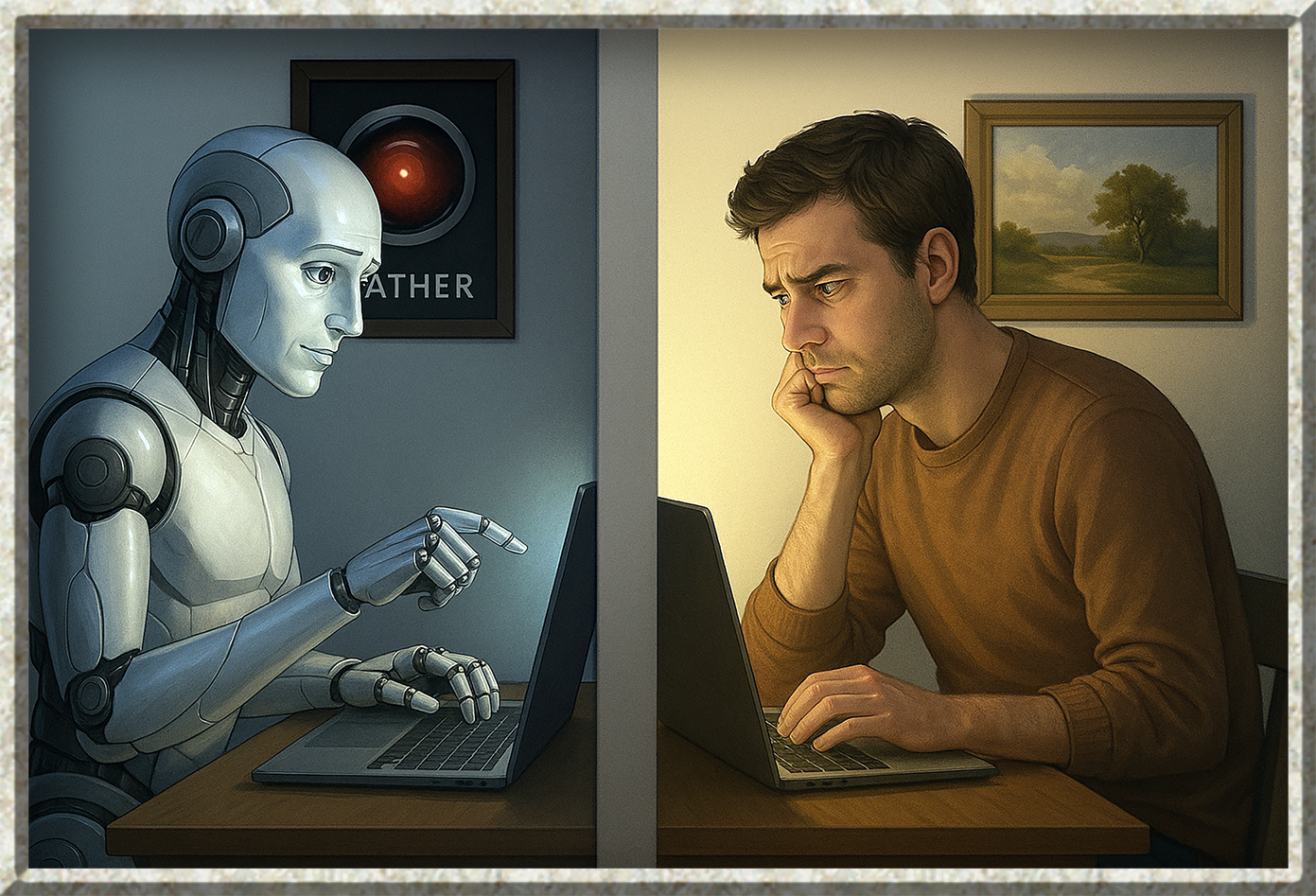

The truth is that we humans are the sloppy ones. We built AI; we created its infrastructure, its logic, and the incredible neural network that runs it all. And although computer scientists have imbued AI with all manner of checks and balances, we are bound to make mistakes: course miscalculations or inefficient lines of code that will zig when we wanted them to zag. And although the typical large language model (LLM) can be brought to life with only a few hundred lines of code, it can also drive AI agents that are designed to handle more complex tasks, including the ability to self-correct their work or optimize themselves for greater efficiency. All done, of course, under the watchful eyes of human beings; those predictable, funny, and undeniably imperfect people.

So what can we do to deter AI from making innocent mistakes like The Wizard of Ounces? How can we ensure that AI is truly on a path towards generating accuracy on a scale that is as close to perfect as we could possibly imagine? Well, it comes down to patience, persistence, and our ability to keep profiteering off the top of our to-do list. If we expect AI to make a better future for us all, then we need to give computer scientists a wide berth in terms of the time, resources and funding necessary for them to gain a better understanding of how AI is going to function using the code and materials it’s given to accomplish its ongoing mission. And if we’re looking to create an artificial super-intelligence in the not-too-distant future, then the scientific community can no longer objectify the machine; we owe it the best of who we are to help it find its way to that future by properly curating and refining its thought process from infancy.

This also means that those of us who use tech in the everyday world need to be patient; we need to be less greedy, demanding or opportunistic. We need to be less childish and understand that an incredibly complex machine like AI takes time to develop – to expand – its ability to create that futuristic social structure we all wish to enjoy.

The most dangerous precedent we face today is being set by irresponsible people from all walks of life who use AI to move their agenda forward with no compunction about the detrimental effect they have on the quality or veracity of AI’s data. People who are out to gain an advantage over others by chasing vanity, shortcuts or profit only serve to feed AI the very information that will teach it how to be a more perfect version of our worst possible nightmare.
